I've spent the past three months doing a full review of the TLS landscape. I plan on publishing it very soon (I just need to polish the doc). In the meantime, here are a few pointers for configuring state-of-the-art TLS on Nginx.

A note for Apache users: you might want to check out kang's blog post on the matter. It's quite similar to what I'm going to talk about here, but with the proper Apache config parameters.

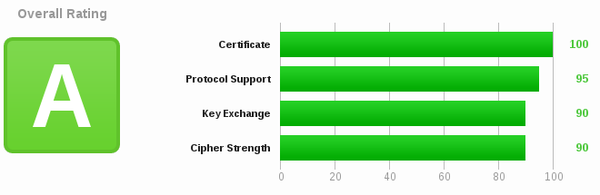

SSLLabs provides a good way to test the quality of your SSL configuration. Click the image above to view the results for jve.linuxwall.info.

StartSSL free class1 certificate

For a personal site, StartSSL.com provides free certificates that are largely sufficient. Head over there and get your own cert; the process is rather straightforward and well explained on their site.

Building Nginx

This blog runs on a version of Debian that's not bleeding edge (as one would expect Debian to be). The version of OpenSSL is getting old, and so is Nginx. I had to build Nginx from source to get support for the latest ECC ciphers and OCSP stapling.

To build Nginx from source, you will need a copy of the PCRE and OpenSSL libraries:

- PCRE can be found here: ftp://ftp.csx.cam.ac.uk/pub/software/programming/pcre/

- OpenSSL can be found here: http://www.openssl.org/source/

Decompress both libraries next to the Nginx source code:

    julien@sachiel:~/nginx_openssl$ ls
    build_static_nginx.sh  nginx  openssl-1.0.1e  pcre-8.33

The script build_static_nginx.sh takes care of the rest. It should work out of the box, but you might have to edit the paths if you have different versions of the libraries. It builds a static version of OpenSSL into Nginx, so you don't have to install the openssl libs afterward.

    #!/usr/bin/env bash
    export BPATH=$(pwd)
    export STATICLIBSSL="$BPATH/staticlibssl"

    #-- Build static openssl
    cd "$BPATH/openssl-1.0.1e"
    rm -rf "$STATICLIBSSL"
    mkdir "$STATICLIBSSL"
    make clean
    ./config --prefix="$STATICLIBSSL" no-shared enable-ec_nistp_64_gcc_128 \
    && make depend \
    && make \
    && make install_sw

    #-- Build nginx
    # Go back to the build root first, otherwise the clone lands inside the openssl tree
    cd "$BPATH"
    # Clone only if the nginx source tree is not already there
    [ -d nginx ] || hg clone http://hg.nginx.org/nginx
    cd "$BPATH/nginx"
    mkdir -p "$BPATH/opt/nginx"
    hg pull
    ./auto/configure --with-cc-opt="-I $STATICLIBSSL/include -I/usr/include" \
    --with-ld-opt="-L $STATICLIBSSL/lib -Wl,-rpath -lssl -lcrypto -ldl -lz" \
    --prefix="$BPATH/opt/nginx" \
    --with-pcre="$BPATH/pcre-8.33" \
    --with-http_ssl_module \
    --with-http_spdy_module \
    --with-file-aio \
    --with-ipv6 \
    --with-http_gzip_static_module \
    --with-http_stub_status_module \
    --without-mail_pop3_module \
    --without-mail_smtp_module \
    --without-mail_imap_module \
    && make && make install

    # Print the build details if the binary was produced
    NGINXBIN="$BPATH/opt/nginx/sbin/nginx"
    if [ -x "$NGINXBIN" ]; then
        "$NGINXBIN" -V
        echo -e "\nNginx binary built in $NGINXBIN\n"
    fi

Server Name Indication

SNI is useful if you plan on having multiple SSL certs on the same IP address. It adds an extension to the TLS handshake that lets the client announce the hostname it wants to reach in the CLIENT HELLO. The web server then uses this announcement to select the certificate to send back in the SERVER HELLO.

Support for SNI is built into recent versions of nginx. Use nginx -V to check:

    # /opt/nginx -V
    ...
    TLS SNI support enabled
    ...
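
If you want to script that check, keep in mind that nginx -V prints its build information on standard error, so it has to be redirected before it can be piped. Here is a minimal sketch; has_sni is a hypothetical helper name of my own, and the binary path is the one from the example above, so adjust it to your install:

```shell
# has_sni is a hypothetical helper, not an nginx command.
# It reads "nginx -V" output on stdin and succeeds if SNI support is reported.
has_sni() {
    grep -q 'TLS SNI support enabled'
}

# Usage (2>&1 is needed because nginx -V writes to stderr):
#   /opt/nginx -V 2>&1 | has_sni && echo "SNI is available"
```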

Step by step Nginx configuration

The full configuration is at the end of this post. Head over there directly if you're not interested in the details.

ssl_certificate

This parameter points to a file that contains the server and intermediate certificates, concatenated together with the server certificate first. Nginx loads that file and sends its content in the SERVER HELLO message during the handshake.
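
Building that file is just a concatenation, with the server certificate placed before the intermediates. A small sketch, assuming your certificates are PEM files; build_chain and the file names are placeholders of mine, not something StartSSL or nginx provides:

```shell
# build_chain is a hypothetical helper: build_chain OUT SERVER_CERT INTERMEDIATE...
# It writes the certificates to OUT in the order given on the command line,
# so pass the server certificate first and the intermediates after it.
build_chain() {
    out="$1"
    shift
    cat "$@" > "$out"
}

# Usage (placeholder file names):
#   build_chain /path/to/signed_cert_plus_intermediates server.crt intermediate.crt
```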

ssl_certificate_key

This is the path to the private key.

ssl_dhparam

When DHE ciphers are used, the server and the client share a prime number to perform the Diffie-Hellman key exchange. I won't get into the details of Perfect Forward Secrecy here, but do know that the larger the prime is, the better the security. The ssl_dhparam directive lets you specify the prime that the server sends to the client in the Server Key Exchange message of the handshake. To generate the dhparam, use openssl:

    $ openssl dhparam -out /path/to/dhparam.pem 4096

A word of warning though: it appears that Java 6 does not support dhparam larger than 1024 bits. Clients that use Java 6 won't be able to connect to your site if you use a larger dhparam. There might be issues with other libraries as well; I only know about the Java one.

ssl_session_timeout

When a client connects multiple times to a server, the server uses session caching to accelerate the subsequent handshakes, effectively reusing the session key generated in the first handshake multiple times. This is called session resumption. This parameter sets the session timeout to 5 minutes, meaning that the session key will be deleted from the cache if not used for 5 minutes.

ssl_session_cache

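The session cache holds all the session keys in a shared memory zone that every nginx worker process can read. It is not written to disk, but anything that can read that memory can read the keys, so make sure that your OS level security is appropriate. This parameter defines a shared cache with a max size of 50MB. According to the nginx documentation, one megabyte stores about 4000 sessions, so this cache has room for roughly 200,000 of them.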

ssl_protocols

List the versions of TLS you wish to support. It's pretty much safe to disable SSLv3 these days, but TLSv1 is still required by a bunch of clients. Remember that clients are not only web browsers, but also libraries that might be used to crawl your site.

ssl_ciphers

The ciphersuite is truly the core of an SSL configuration. Mine is very long, and I spent a ridiculous amount of time researching it. I won't get into the details of its construction here, as I'll be writing more on this in the next few weeks.

ssl_prefer_server_ciphers

This parameter forces nginx to pick the preferred cipher from its own ciphersuite, as opposed to using the one preferred by the client. This is an important option since most clients have unsafe or outdated preferences, and you'll most likely provide better security by enforcing a strong ciphersuite server-side.

HTTP Strict Transport Security

HSTS is an HTTP header that tells clients to connect to the site using HTTPS only. It enforces security by telling clients that any HTTP URL to a given site should be requested over HTTPS instead. The directive is cached on the client side for the duration of max-age. In this case, 15768000 seconds, or about 182 days.
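
The max-age value is expressed in seconds, which is not very readable. A quick way to sanity-check it from a shell, using a helper name (max_age_days) that I made up for this example:

```shell
# max_age_days is a hypothetical helper: whole days contained in an
# HSTS max-age value given in seconds.
max_age_days() {
    echo $(( $1 / 86400 ))   # 86400 seconds per day, integer division
}

max_age_days 15768000   # 15768000 / 86400 = 182.5, printed as 182
```

Shell arithmetic truncates, so the half day is dropped. Going the other way, a full year would be 365 * 86400 = 31536000 seconds.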

ssl_stapling

When connecting to a server, clients should verify the validity of the server certificate using either a Certificate Revocation List (CRL), or an Online Certificate Status Protocol (OCSP) record. The problem with CRL is that the lists have grown huge and take forever to download. OCSP is much more lightweight, as only one record is retrieved at a time. But the side effect is that OCSP requests must be made to a 3rd party OCSP responder when connecting to a server.

The solution is to allow the server to send the OCSP record during the TLS handshake, therefore bypassing the OCSP responder. This mechanism saves a roundtrip between the client and the OCSP responder, and is called OCSP Stapling.

Nginx supports OCSP stapling in two modes. The OCSP file can be downloaded and made available to nginx, or nginx itself can retrieve the OCSP record and cache it. We use the second mode in the configuration below.

The location of the OCSP responder is taken from the Authority Information Access field of the signed certificate:

    Authority Information Access:
        OCSP - URI:http://ocsp.startssl.com/sub/class1/server/ca
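
To look up that field for your own certificate, you can filter the text dump that openssl produces. This is a rough sketch that assumes the dump uses the "OCSP - URI:" label shown above; extract_ocsp_uri and the certificate path are names I picked for the example:

```shell
# extract_ocsp_uri is a hypothetical helper. It reads the output of
# "openssl x509 -noout -text" on stdin and prints every OCSP responder URL
# listed in the Authority Information Access field.
extract_ocsp_uri() {
    sed -n 's/^[[:space:]]*OCSP - URI://p'
}

# Usage (placeholder path):
#   openssl x509 -in /path/to/signed_cert -noout -text | extract_ocsp_uri
```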

ssl_stapling_verify

Nginx has the ability to verify the OCSP record before caching it. But to enable it, a list of trusted certificates must be available in the ssl_trusted_certificate parameter.

ssl_trusted_certificate

This is a path to a file where CA certificates are concatenated. For ssl_stapling_verify to work, this file must contain the Root CA cert and the Intermediate CA certificates. In the case of StartSSL, the Root CA and Intermediate I use are here: http://jve.linuxwall.info/ressources/code/startssl_trust_chain.txt

resolver

Nginx needs a DNS resolver to obtain the IP address of the OCSP responder. In this example, I use Google's.

Full configuration

The full configuration is below. Feel free to copy and paste it, and check your error logs if something fails to work.

    server {
        # "listen 443 ssl" is equivalent to "listen 443" plus the older "ssl on" directive
        listen 443 ssl;

        # certs sent to the client in SERVER HELLO are concatenated in ssl_certificate
        ssl_certificate /path/to/signed_cert_plus_intermediates;
        ssl_certificate_key /path/to/private_key;

        # Diffie-Hellman parameter for DHE ciphersuites, recommended 2048 bits
        ssl_dhparam /path/to/dhparam.pem;

        ssl_session_timeout 5m;
        ssl_session_cache shared:NginxCache123:50m;

        ssl_protocols TLSv1 TLSv1.1 TLSv1.2;
        ssl_ciphers 'ECDHE-RSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES256-GCM-SHA384:ECDHE-ECDSA-AES256-GCM-SHA384:kEDH+AESGCM:ECDHE-RSA-AES128-SHA256:ECDHE-ECDSA-AES128-SHA256:ECDHE-RSA-AES128-SHA:ECDHE-ECDSA-AES128-SHA:ECDHE-RSA-AES256-SHA384:ECDHE-ECDSA-AES256-SHA384:ECDHE-RSA-AES256-SHA:ECDHE-ECDSA-AES256-SHA:DHE-RSA-AES128-SHA256:DHE-RSA-AES128-SHA:DHE-RSA-AES256-SHA256:DHE-DSS-AES256-SHA:AES128-GCM-SHA256:AES256-GCM-SHA384:ECDHE-RSA-RC4-SHA:ECDHE-ECDSA-RC4-SHA:RC4-SHA:HIGH:!aNULL:!eNULL:!EXPORT:!DES:!3DES:!MD5:!PSK';
        ssl_prefer_server_ciphers on;

        # Enable this if you want HSTS (recommended, but be careful)
        add_header Strict-Transport-Security max-age=15768000;

        # OCSP Stapling ---
        # fetch OCSP records from URL in ssl_certificate and cache them
        ssl_stapling on;
        ssl_stapling_verify on;

        ## verify chain of trust of OCSP response using Root CA and Intermediate certs
        ssl_trusted_certificate /path/to/root_CA_cert_plus_intermediates;

        resolver 8.8.8.8;

        # <insert the rest of your server configuration here>
    }